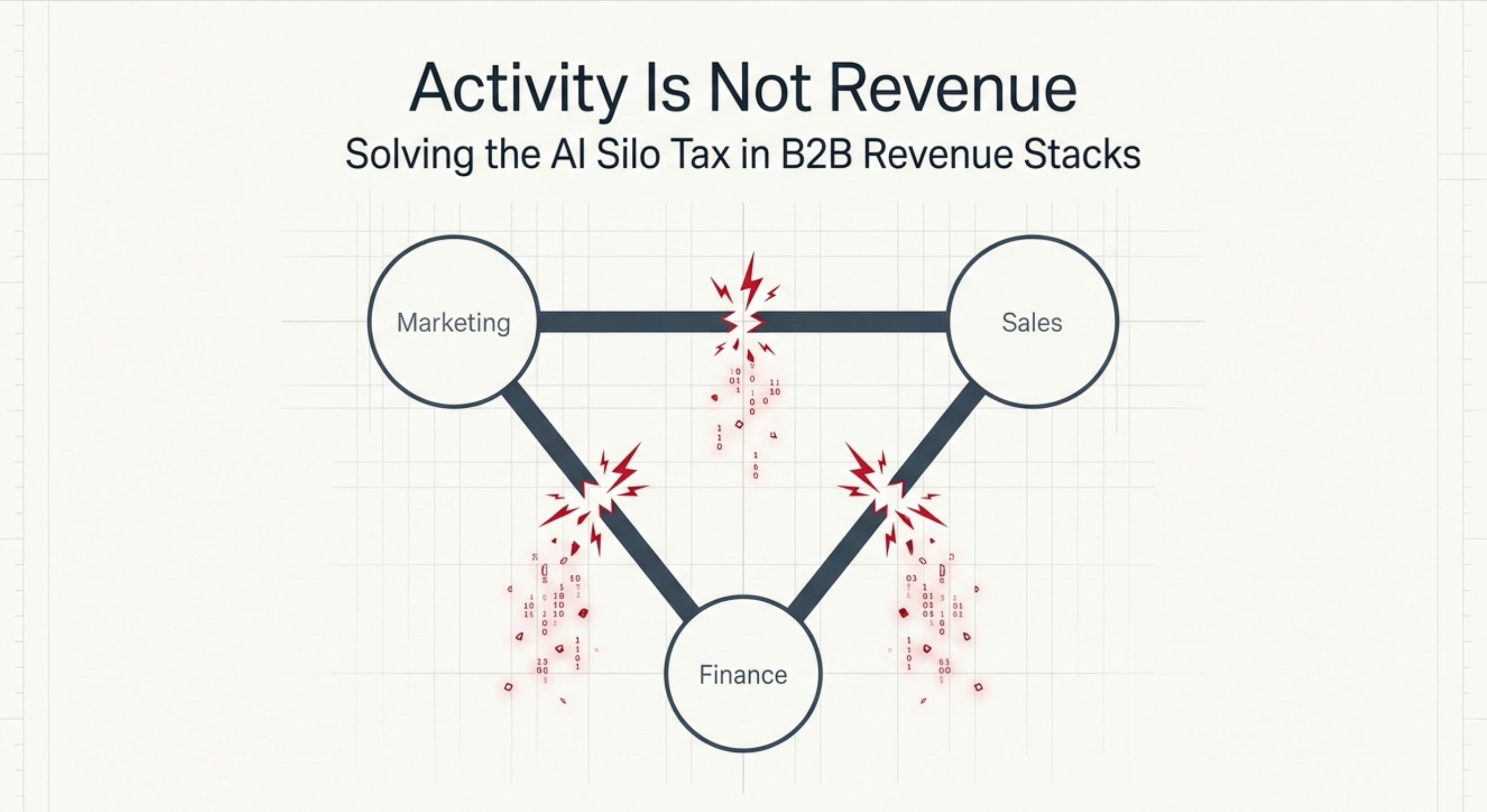

Most AI tools fail to generate revenue for a single reason: they operate in isolation. The content AI, the prospecting AI, and the CRM each generate activity (emails sent, meetings booked, sequences completed), but none of them triggers action in the others. Gartner research finds this data-silo pattern affects 67% of B2B companies, costing 10–15% of potential revenue. We call this the Silo Tax. The fix is not another tool. It is a System of Action: a connected architecture where signals automatically trigger revenue behavior.

The Revenue Meeting That Explains Everything

October. Q3 results. The RevOps leader at a $45M SaaS company presents to the leadership team.

Marketing reports 1,847 MQLs, a record quarter. Sales reports a record quarter of poor-fit leads. Finance reports declining win rates. The CFO asks the question nobody has a clean answer to: why hasn't the AI spend approved in January shown up in the revenue numbers?

The RevOps leader knows exactly what happened. The content AI, the prospecting AI, and the CRM are three separate systems. Each one generated activity. None of them knew what the others were doing. The Q3 report shows the number of emails sent, meetings booked, and sequences completed. The revenue number tells a different story.

This is not a story about tools that didn't work. Every tool did what it was built to do. It's a story about an architecture that was never designed to connect them.

What the Silo Tax Actually Costs

The Silo Tax is the measurable cost of AI experiments that add rework rather than accelerate revenue. It shows up when your marketing team, your sales team, and your finance team each pull a different revenue number from the same quarter, because they're each looking at a different system, none of which shares data with the others.

The cost is not abstract. Gartner's 2024 research found that companies with inadequate marketing-sales alignment lose 10–15% of potential revenue, and that in 67% of B2B companies, marketing and sales teams either can't access the same customer data or access it through different systems.

That's not an edge case. That's the default operating state for most mid-market companies running multiple AI tools.

When Marketing Reports Record MQLs and Sales Reports Poor-Quality Leads

The attribution conflict is the most visible symptom. Marketing's attribution model shows a record lead quarter. Sales' CRM shows a record quarter of leads that didn't convert. Both teams are right inside their own system. Neither system talks to the other.

Gartner's research found that in companies with this fragmentation pattern, 61% of marketing-generated leads are never followed up by sales teams. The leads exist in one system. The follow-up obligation is supposed to happen in another. The handoff falls through the gap between them.
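One way to check whether this handoff gap exists in your own stack is to compare the lead IDs marketing generated against the lead IDs the CRM shows as followed up. The sketch below is a minimal illustration, assuming both systems can export lead IDs for the same period; the function name, the ID format, and the sample data are hypothetical.

```python
def follow_up_gap(marketing_lead_ids, crm_followed_up_ids):
    """Return (unfollowed_ids, share_unfollowed) for one reporting period."""
    leads = set(marketing_lead_ids)
    if not leads:
        return set(), 0.0
    # Leads that marketing generated but the CRM never shows a follow-up for.
    unfollowed = leads - set(crm_followed_up_ids)
    return unfollowed, len(unfollowed) / len(leads)


# Hypothetical exports from the two systems for the same quarter.
marketing_leads = ["L-001", "L-002", "L-003", "L-004", "L-005"]
crm_followed_up = ["L-002", "L-005", "L-999"]  # L-999 exists only in the CRM

unfollowed, share = follow_up_gap(marketing_leads, crm_followed_up)
print(sorted(unfollowed), f"{share:.0%}")
```

IDs that appear only in the CRM are ignored here, since the question is what happened to marketing-generated leads. In practice the harder part is usually agreeing on a shared lead identifier across the two systems.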

When the AI Is Busy, and the Revenue Number Isn't Moving

The second pattern is subtler and more expensive. The AI tools are running: sequences are being sent, content is being published, agents are qualifying inbound leads. But the revenue number isn't moving in proportion to the activity.

A 2025 MIT study of 300 enterprise AI deployments found that U.S. businesses invested $35–$40 billion in internal AI projects, yet 95% saw no measurable return on investment or P&L impact. McKinsey's 2025 State of AI survey of 1,993 organizations found that only 39% report any measurable impact on EBIT from AI, and most of those say AI accounts for less than 5% of EBIT.

Near-universal adoption. Rare impact. The gap between those two facts is the Silo Tax operating at scale.

Who This Affects: The AI Transition Operator

The AI Transition Operator is not a company that missed the AI wave. They caught it. They bought the tools, allocated the budget, and ran the pilots. The problem is what happened next.

Many of these companies started where the pipeline goes dark in growing companies: no system at all, no CRM, and revenue running on email threads and institutional memory. They solved that problem by buying tools to close the visibility gap. Then they bought more tools to close the follow-up gap. Then came a content tool, a prospecting tool, and a reporting tool.

Each purchase was the right decision at the time. The accumulation is the problem. If the previous challenge was not being able to see the pipeline, the current challenge is being able to see it but not being able to act on what you see, because the tools that generate signals don't connect to the tools that should trigger action.

This article is written for three personas: the VP of Marketing who is tracking engagement but not revenue; the Head of RevOps who manages integration debt rather than generating insights; and the CTO or Head of Growth who approved multiple AI tools and is now fielding questions from the CFO about where the return is.

Five Signs Your AI Is Producing Activity, Not Revenue

These five patterns indicate a Silo Tax problem rather than a tool quality problem:

- Your marketing team and your sales team cite completely different numbers when asked what revenue AI contributed last quarter.

- Your AI sequences are sending at high volume, but 61% or more of the leads they surface are never followed up on, because the follow-up system is manual and downstream.

- Your reps are saving time through AI automation (HubSpot's 2025 research found that 64% of reps save 1–5 hours per week), yet quota attainment is flat or declining despite these efficiency gains.

- Your CRM contains data your agents could act on, but they don't have access to it. Salesforce's 2024 State of Sales report found that sales leaders estimate 19% of their company's data is inaccessible to AI systems.

- Your CFO is asking the same question as the one in the October meeting above, and you don't have a board-presentable answer.

If three or more of these describe your current state, the issue is architectural, not operational.

Why the Architecture Is the Problem, Not the Tools

The tools are not broken. That's the important thing to understand before trying to fix the Silo Tax. Each tool was designed to do something specific, and each one is doing it. The problem is that they were designed as standalone instruments, not as an orchestrated system.

McKinsey's 2025 research identified the single factor distinguishing high-performing AI organizations from the rest: high performers are 2.8x more likely to report fundamental workflow redesign around AI rather than layering AI onto existing processes. Companies that only layer tools get what McKinsey calls "micro-productivity": marginal time savings that don't compound into revenue outcomes.

That's the mechanism. Tool layering produces isolated efficiency. Connected architecture produces revenue.

What a System of Action Does That Your Current Stack Does Not

A system of record stores what happened. A System of Action responds to what is happening.

Most mid-market AI stacks are built on top of a system of record. The CRM captures the meeting. The AI sends the sequence. The rep checks the dashboard. Every step requires a human to advance the deal. The system waits.

A System of Action continuously sweeps for revenue signals. When a prospect's behavior indicates readiness (a cluster of intent signals crossing a threshold), the system triggers the next action automatically, without waiting for a rep to notice. The human enters the workflow at the decision point, not at every administrative step between signals.
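The threshold mechanism described above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any specific platform: the signal names, the weights, the threshold value, and the `route_to_rep_for_decision` action are all hypothetical placeholders.

```python
from dataclasses import dataclass, field

# Hypothetical weights and threshold; real values would be tuned per business.
SIGNAL_WEIGHTS = {"pricing_page_visit": 3, "demo_video_watched": 2, "email_reply": 4}
READINESS_THRESHOLD = 7


@dataclass
class Prospect:
    name: str
    score: int = 0
    triggered: bool = False
    actions: list = field(default_factory=list)


def record_signal(prospect, signal):
    """Add one signal's weight; fire the next action once when the threshold is crossed."""
    prospect.score += SIGNAL_WEIGHTS.get(signal, 0)  # unknown signals add nothing
    if prospect.score >= READINESS_THRESHOLD and not prospect.triggered:
        prospect.triggered = True
        # The human enters here, at the decision point.
        prospect.actions.append("route_to_rep_for_decision")
    return prospect


acme = Prospect("Acme Corp")
for signal in ["pricing_page_visit", "demo_video_watched", "email_reply"]:
    record_signal(acme, signal)

print(acme.score, acme.actions)
```

No single signal in this example crosses the threshold on its own; the action fires only when the cluster accumulates. The `triggered` flag keeps later signals from firing the same action twice.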

Why 81% AI Adoption Coexists With 67% Quota Miss

Salesforce's 2024 State of Sales data captures the contradiction precisely: 81% of sales teams are experimenting with or have fully implemented AI. Simultaneously, 67% of reps don't expect to meet their quota. And 51% of sales leaders say tech silos delay or limit their AI initiatives.

Adoption is not the problem. Connection is.

Connected vs. Disconnected: The Decision Table

| If your current state is... | What it costs you | What a connected architecture changes |
|---|---|---|
| Marketing and sales cite different revenue numbers from the same quarter | Attribution conflict; CFO cannot verify ROI; board approval for AI spend becomes faith-based | Single Revenue Intelligence layer; one number, one source of truth, board-presentable |
| AI follow-up depends on a rep acting on a notification | 61% of leads never followed up; first-responder advantage lost to competitors with automated triggers | Signal-triggered action; the agent advances the deal state automatically when the prospect's behavior indicates readiness |
| AI tools measure activity (emails sent, meetings booked, sequences completed) | ROI is immeasurable; CFO sees spend without return; technology budget questioned | Cost per Outcome replaces activity metrics; the board sees dollar-denominated proof of AI contribution |
| 19% of your CRM data is inaccessible to your AI systems | Agents work from a partial data picture; recommendations are incomplete; context is lost at handoffs | Unified data model; every agent, autonomous or human, works from the same persistent record |
| AI adoption is high; quota attainment is flat or declining | Silo Tax: efficiency gains stay local to each tool, don't compound into revenue | Workflow redesign; efficiency gains from each tool feed the next stage; the system acts on a compound signal |

The CETDIGIT Perspective: What Connecting the Stack Actually Requires

The architectural gap has three layers. Closing it requires addressing all three in order.

The first is the data-sharing gap. In 67% of B2B companies, marketing and sales cannot access the same customer data. Before any agent can act intelligently on a revenue signal, the underlying data has to be unified. That means a single data model connecting CRM, marketing automation, and intent data, not periodic exports, not weekend reconciliations.
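As a rough illustration of what "a single data model" means in practice, the sketch below merges rows from three sources into one record per account. It assumes every source carries a shared `account_id` key, which is often the hard part in a real stack; the function name and field names are hypothetical.

```python
from collections import defaultdict


def unify_records(crm_rows, marketing_rows, intent_rows, key="account_id"):
    """Merge rows from three sources into one record per account."""
    unified = defaultdict(dict)
    sources = (("crm", crm_rows), ("marketing", marketing_rows), ("intent", intent_rows))
    for source_name, rows in sources:
        for row in rows:
            fields = {k: v for k, v in row.items() if k != key}
            # Repeated rows for one account merge into the same source entry.
            unified[row[key]].setdefault(source_name, {}).update(fields)
    return dict(unified)


# Hypothetical sample rows from each system.
crm = [{"account_id": "A-1", "stage": "proposal"}, {"account_id": "A-2", "stage": "discovery"}]
marketing = [{"account_id": "A-1", "last_campaign": "q3_webinar"}]
intent = [{"account_id": "A-1", "pricing_page_visits": 3}]

records = unify_records(crm, marketing, intent)
print(records["A-1"])
print(records["A-2"])
```

Account A-2 comes back with only a `crm` entry, which makes the data-sharing gap visible per account: any agent acting on A-2 would be working without marketing or intent context.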

The second is the orchestration layer. A unified data model is necessary but not sufficient. The data has to trigger action. A System of Action replaces the manual handoff (the rep checks the CRM, decides on the next step, and executes) with an architecture that continuously monitors signals and automatically triggers the appropriate response. The human enters when judgment is required. The system handles everything before that point.

The third is the measurement layer. Revenue Intelligence replaces activity reporting. Instead of showing emails sent and meetings booked, the measurement layer shows which signals preceded closed revenue, the Cost per Outcome for each action type, and where in the Revenue Graph the next opportunity sits. The CFO gets a number tied to a specific action, not a vibe.
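The article does not give a formula for Cost per Outcome, so the sketch below assumes one plausible reading: total spend on an action type divided by the number of closed-won deals attributed to that action type. The field names and sample figures are hypothetical.

```python
def cost_per_outcome(actions):
    """actions: iterable of dicts with 'type', 'cost', and 'closed_won' (bool).

    Returns {action_type: cost per closed-won deal, or None if there were none}.
    """
    totals = {}
    for action in actions:
        bucket = totals.setdefault(action["type"], {"cost": 0.0, "outcomes": 0})
        bucket["cost"] += action["cost"]
        if action["closed_won"]:
            bucket["outcomes"] += 1
    return {
        action_type: (b["cost"] / b["outcomes"] if b["outcomes"] else None)
        for action_type, b in totals.items()
    }


# Hypothetical action log: spend per action and whether it preceded closed revenue.
log = [
    {"type": "email_sequence", "cost": 100, "closed_won": False},
    {"type": "email_sequence", "cost": 100, "closed_won": True},
    {"type": "email_sequence", "cost": 100, "closed_won": False},
    {"type": "webinar", "cost": 500, "closed_won": True},
    {"type": "webinar", "cost": 300, "closed_won": True},
    {"type": "cold_call", "cost": 50, "closed_won": False},
]

print(cost_per_outcome(log))
```

An action type with spend but no closed-won deals returns `None` rather than zero, so activity without outcomes stays visible instead of looking free.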

This three-layer architecture underpins CETDIGIT's AI services framework. It is not a tool recommendation. It is a structural requirement for any mid-market company trying to move from AI adoption to AI revenue.

Your Next Step: The Stack Unification Audit

The Stack Unification Audit is a diagnostic of where your AI investment is leaking and what it would cost to connect it to revenue.

It starts with the data layer, mapping what your tools know and what they can't share. It moves to the orchestration layer to identify where human handoffs break the signal chain. It ends with a Revenue Intelligence baseline: what connected measurement would actually show the board.

The primary architectural destination for companies going through this process is the AI Revenue Engine, the connected architecture that replaces fragmented AI experiments with a unified system of action. For companies whose primary gap is at the platform execution layer, the CETRAI orchestration platform handles the agent-to-CRM connection that enables the Revenue Engine to operate. A Stack Unification engagement typically clarifies which layer needs work first.

Frequently Asked Questions

If AI is saving my reps 1–5 hours per week, why isn't it moving the revenue number?

Time savings are local. HubSpot's 2025 research found that 64% of reps save 1–5 hours per week through AI automation, but those savings remain within each tool. The rep saves time on email drafting. That saved time doesn't automatically feed a better-qualified signal to the CRM, which doesn't automatically trigger a faster follow-up. Each efficiency gain is real and stays siloed. Revenue moves when efficiency gains compound across a connected system, not when they accumulate separately inside individual tools.

What is the Silo Tax, and how do I calculate it for my company?

The Silo Tax is the measurable cost of AI experiments that add rework rather than accelerate revenue. Gartner's 2024 research quantified the baseline: companies with marketing-sales data silos lose 10–15% of potential revenue. For a $50M company, that's $5–$7.5M in unrealized revenue annually, not from a tool failure, but from a connection failure. To estimate your own Silo Tax, start with how often your marketing and sales teams cite different revenue numbers from the same quarter. That attribution gap is the most visible evidence of the cost.
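The arithmetic behind the $50M example can be written as a short function. This is a back-of-the-envelope sketch, not a calculation method from Gartner: it applies the 10–15% range directly to annual revenue, as the example above does, and the function name is hypothetical.

```python
def silo_tax_range(annual_revenue, low_pct=10, high_pct=15):
    """Return the (low, high) estimate of unrealized annual revenue in dollars."""
    return annual_revenue * low_pct / 100, annual_revenue * high_pct / 100


low, high = silo_tax_range(50_000_000)
print(f"${low:,.0f} to ${high:,.0f}")
```

The result is a range, not a measurement. It gives a starting figure for the conversation; the attribution gap between your marketing and sales numbers is what tells you where in that range you are likely to sit.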

Why do 95% of AI pilots fail to produce measurable ROI?

The MIT NANDA Initiative's 2025 study of 300 enterprise AI deployments found that 95% saw zero measurable return on investment. The distinguishing factor in the 5% that succeeded: they picked one specific pain point, executed well, and connected the AI output to a downstream revenue action. The majority of failing pilots deployed horizontal AI tools, copilots, and chatbots that spread marginal efficiency across many employees without concentrating it in any measurable revenue outcome. The tool wasn't the failure. The architecture was.

Is this a tool problem or a data problem?

It's an architectural problem with both a tool layer and a data layer. The tools are usually functional. The data is often accessible. The issue is that the tools don't share the data, and the data doesn't trigger action. Salesforce's 2024 research found that 19% of a typical company's CRM data is inaccessible to AI systems, not because it doesn't exist, but because the connection between the data store and the agent layer was never built. Fix the architecture, and the tools you already have start producing different outcomes.

What is a System of Action, and how is it different from a CRM?

A CRM is a system of record; it captures what happened after a human decided to record it. A System of Action responds to what is happening without waiting for a human to advance the deal. When a prospect's behavior crosses a signal threshold (a cluster of intent indicators firing in parallel), a System of Action triggers the next revenue action automatically. The human enters the workflow at the judgment point. Everything before that is handled by the system. Most mid-market AI stacks are built on top of a system of record. The System of Action is the architectural layer above it.

How long does it take to connect a fragmented AI stack?

In CETDIGIT's client engagements, the diagnostic phase, which identifies where data-sharing gaps and handoff failures are concentrated, typically runs for two to four weeks. The connection work varies by stack complexity. Companies running HubSpot and Salesforce as separate systems with manual handoffs between them can see the first automated signal triggers within six to eight weeks of beginning a structured Stack Unification engagement. The timeline is less about the tools and more about how cleanly the underlying data model can be unified.

Stack Unification Audit

Find out exactly where your AI investment is leaking, and what it would take to connect it to revenue. A Stack Unification Audit is a diagnostic tool for identifying where your tools are breaking the signal chain and what a connected architecture would change in your Revenue Intelligence picture. Book a Stack Unification Audit.
